Stanford Smallville Virtual Town Part 3: Agent Architecture

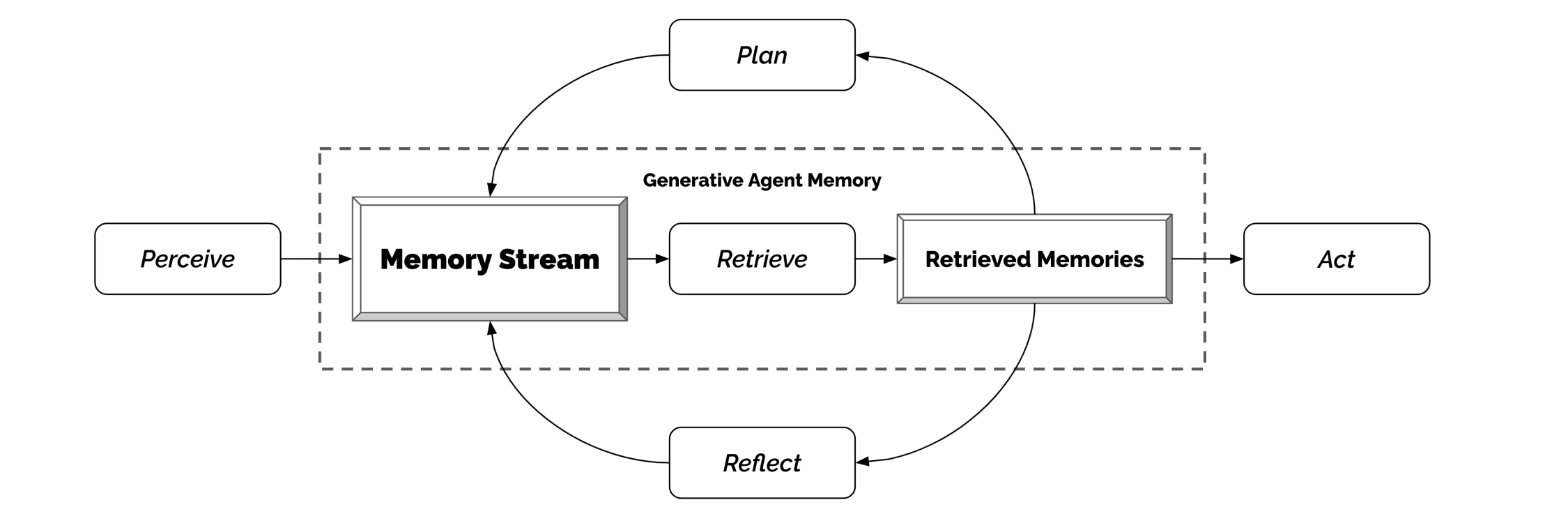

This third part of the notes corresponds to the “GENERATIVE AGENT ARCHITECTURE” section of the paper “Generative Agents: Interactive Simulacra of Human Behavior.” It focuses on the three core mechanisms of the agent architecture: Memory Stream, Reflection, and Planning.

As the figure below shows, the core of the architecture is the Memory Stream, a comprehensive record of the agent’s experiences.

source: figure 5 from https://arxiv.org/pdf/2304.03442

1. Memory and Retrieval

- The units within the Memory Stream are Memory Objects, containing:

- Natural language description

- Creation timestamp

- Most recent access timestamp

- Type: The most basic element is an “Observation,” covering the agent’s own actions or perceived behaviors of others.
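
The fields listed above map naturally onto a small record type. The following is a minimal sketch, not the paper’s code: the names `MemoryObject`, `MemoryType`, `MemoryStream`, `add`, and `touch` are hypothetical, and the reflection and plan types are included only because the later sections say those are stored in the same stream.

```python
from dataclasses import dataclass
from datetime import datetime
from enum import Enum


class MemoryType(Enum):
    OBSERVATION = "observation"  # the most basic element
    REFLECTION = "reflection"    # see section 2
    PLAN = "plan"                # see section 3


@dataclass
class MemoryObject:
    description: str            # natural language description
    created_at: datetime        # creation timestamp
    last_accessed_at: datetime  # most recent access timestamp
    memory_type: MemoryType = MemoryType.OBSERVATION


class MemoryStream:
    """Append-only list of memory objects (hypothetical helper)."""

    def __init__(self):
        self.memories = []

    def add(self, description, now, memory_type=MemoryType.OBSERVATION):
        # A new memory counts as accessed at the moment it is created.
        memory = MemoryObject(description, now, now, memory_type)
        self.memories.append(memory)
        return memory

    def touch(self, memory, now):
        # Called when a memory is retrieved, so recency can be recomputed later.
        memory.last_accessed_at = now
```

Keeping the access timestamp separate from the creation timestamp is what lets an old but recently retrieved memory stay "fresh" for the recency score described below.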

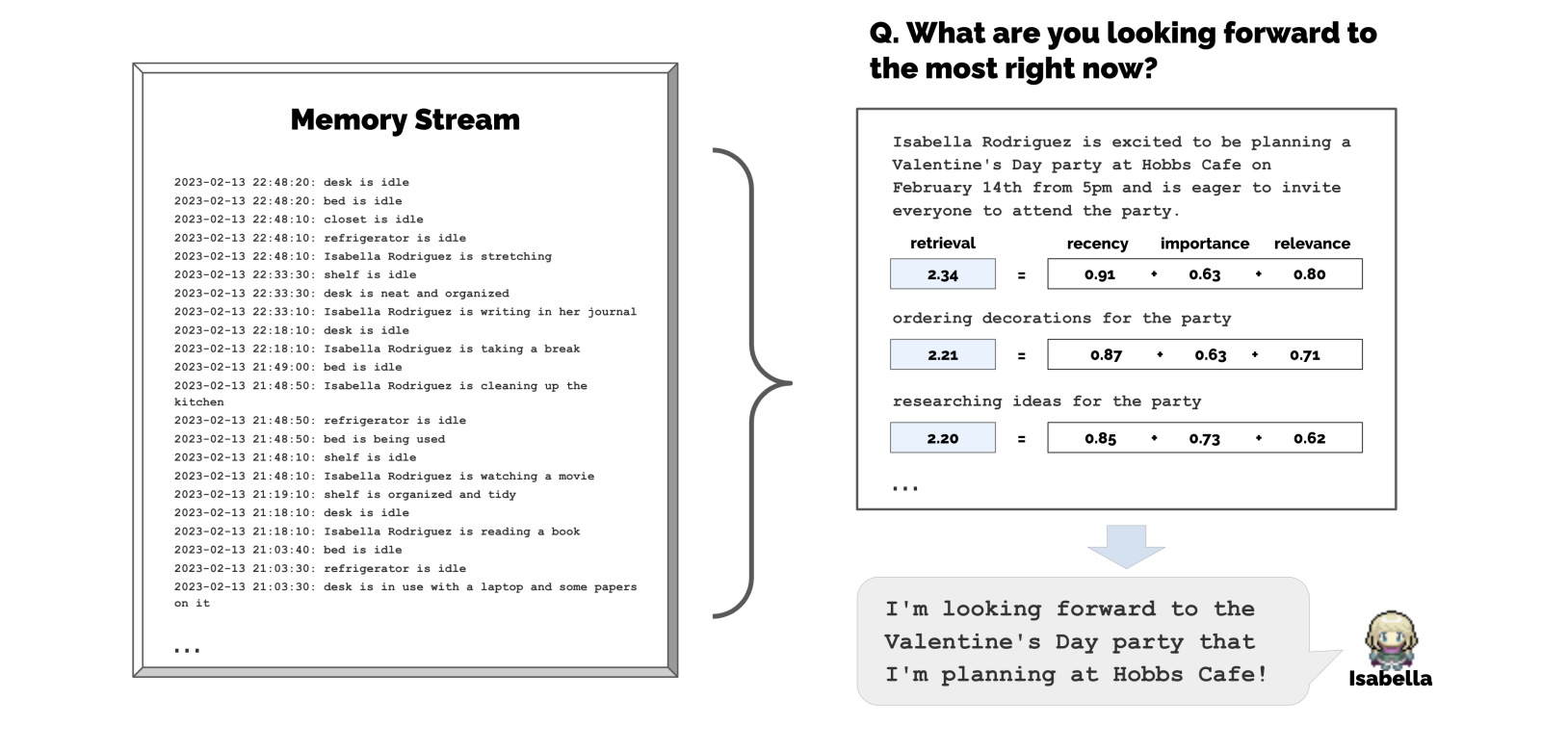

- Challenge: Passing all memories into the LLM prompt for every action would exceed context window limits and often include irrelevant information.

- Example: Asking Isabella what she has been working on lately might result in a vague answer if provided with all her memories. However, if the retrieval is narrowed to event-related memories, she will mention the Valentine’s Day party.

- Solution: A retrieval function that extracts the most relevant memories based on the agent’s current state. It involves three elements:

- Recency: Scores decay exponentially with the time since the memory was last retrieved (decay factor 0.995), so recently accessed memories are more vivid and are prioritized.

- Importance: An LLM assigns a score from 1 to 10 for how important or “poignant” a memory is. For instance, cleaning a room might score 2, while asking a crush out on a date might score 8.

- Relevance: Evaluates semantic similarity (via cosine similarity of embedding vectors) between the memory and the current situation or query.

- Formula: \(score = \alpha_{recency} \cdot recency + \alpha_{importance} \cdot importance + \alpha_{relevance} \cdot relevance\)

- Normalization: These three scores are normalized to a $[0, 1]$ range using Min-max scaling before being summed.

- Weights: In the paper’s implementation, all weights ($\alpha$) are set to $1$.
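
To make the scoring concrete, here is a minimal sketch of the weighted sum with min-max normalization. It is an illustration under stated assumptions, not the paper’s implementation: memories are plain dicts with hypothetical keys `hours_since_access`, `importance`, and `embedding`; the time unit for the decay is assumed to be hours; and the handling of the degenerate case where all values are equal is my own choice.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def min_max(values):
    """Scale values to [0, 1]. Assumption: if all values are equal, return zeros."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def retrieval_scores(memories, query_embedding, decay=0.995, weights=(1.0, 1.0, 1.0)):
    """Return one score per memory: weighted sum of normalized components."""
    recency = [decay ** m["hours_since_access"] for m in memories]
    importance = [m["importance"] for m in memories]
    relevance = [cosine_similarity(m["embedding"], query_embedding) for m in memories]

    recency, importance, relevance = min_max(recency), min_max(importance), min_max(relevance)
    a_rec, a_imp, a_rel = weights
    return [
        a_rec * r + a_imp * i + a_rel * v
        for r, i, v in zip(recency, importance, relevance)
    ]


# Toy usage: an old but important, on-topic memory outranks a fresh, trivial one.
memories = [
    {"hours_since_access": 0, "importance": 2, "embedding": [1.0, 0.0]},
    {"hours_since_access": 48, "importance": 8, "embedding": [0.0, 1.0]},
]
scores = retrieval_scores(memories, query_embedding=[0.0, 1.0])
```

Because each component is normalized before summing, no single signal dominates merely because of its raw scale (importance runs up to 10, while cosine similarity is at most 1).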

source: figure 6 from https://arxiv.org/pdf/2304.03442

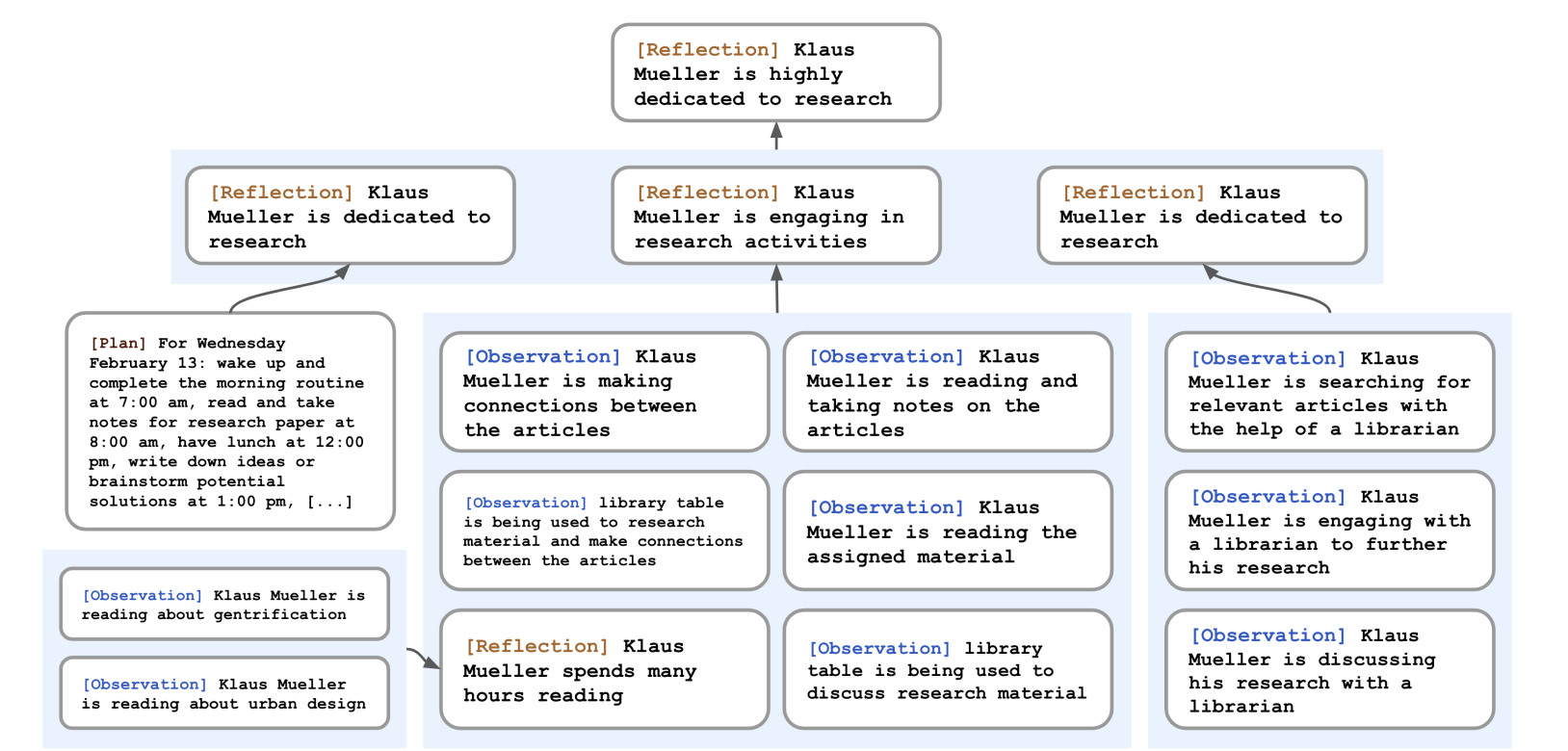

2. Reflection

- Challenge: Raw observations are too fragmented for generalization or reasoning about deeper relationships.

- Example: Klaus might choose a neighbor he sees most often for coffee instead of choosing Maria, whose interests better align with his own.

- Trigger: Reflection runs when the sum of the Importance scores of all new memories since the last reflection exceeds 150 (in practice, roughly two or three times per day).

- Four-Step Process:

- Query Formulation: The LLM identifies 3 high-level questions based on the last 100 entries.

- Retrieval: Uses these questions to query the memory stream (including previous reflections).

- Inquiry/Reasoning: The LLM extracts insights from the retrieved results and cites the specific memory records that serve as evidence for each insight.

- Storage: These abstract insights are stored back in the memory stream.

- Structure: Reflection Tree

- Leaf nodes: Basic observations (reading a book, having coffee).

- Non-leaf nodes: Abstract thoughts (enjoys research, has a close relationship with someone). The higher the level, the more abstract the thought.
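
The trigger and the four-step process can be sketched as follows. This is a hedged illustration only: `ReflectionTrigger`, `reflect`, and the three callables `ask_questions`, `retrieve`, and `infer_insights` are hypothetical names standing in for the LLM prompts and the retrieval function, and resetting the counter after a reflection is an assumption consistent with "since the last reflection".

```python
REFLECTION_THRESHOLD = 150  # sum of importance scores that triggers a reflection


class ReflectionTrigger:
    """Accumulates importance of new memories since the last reflection."""

    def __init__(self, threshold=REFLECTION_THRESHOLD):
        self.threshold = threshold
        self.accumulated = 0

    def record(self, importance):
        """Add one new memory's importance; return True when reflection should run."""
        self.accumulated += importance
        if self.accumulated > self.threshold:
            self.accumulated = 0  # assumption: start counting again afterwards
            return True
        return False


def reflect(memories, ask_questions, retrieve, infer_insights, window=100):
    """Run the four steps once and append the resulting insights to `memories`."""
    recent = memories[-window:]
    questions = ask_questions(recent)                 # 1. query formulation
    insights = []
    for question in questions:
        evidence = retrieve(question, memories)       # 2. retrieval (may include old reflections)
        insights.extend(infer_insights(question, evidence))  # 3. reasoning with cited evidence
    memories.extend(insights)                         # 4. storage back into the stream
    return insights
```

Since insights are written back into the same list that later retrievals read from, a subsequent reflection can build on earlier ones, which is how the tree grows upward from leaf observations.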

source: figure 7 from https://arxiv.org/pdf/2304.03442

3. Planning and Reacting

- Challenge: An LLM can produce plausible behavior for the current moment, but without planning over a longer time horizon the agent’s sequence of actions may not stay coherent.

- Example: Given Klaus’s background and the current time, the LLM might schedule him to eat lunch at 12:00, but then also schedule him for lunch again at 12:30 and 1:00.

- Solution: Plans describe a sequence of future actions for the agent, helping maintain behavioral consistency. A Plan includes a location, a starting time, and a duration.

- Like reflections, plans are stored in the memory stream and called during retrieval. This allows agents to consider Observations, Reflections, and Plans simultaneously when deciding how to act. Agents can update their plans as needed.

- Planning Generation: Top-down Recursive Decomposition. To avoid robotic or overly repetitive behavior, detail is generated recursively:

- First Step (Broad Strokes): Based on the agent’s characteristics and a summary of the previous day, a daily outline is generated (typically 5-8 blocks).

- Second Step (Decomposition): Breaks the outline into finer task chunks (e.g., breaking “1:00 pm - 5:00 pm writing” into hourly tasks).

- Third Step (Fine-grained): Further decomposition into 5-15 minute micro-actions (e.g., 4:00 pm grab a snack, 4:05 pm go for a walk).
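
The three steps above can be sketched as one recursive function over a plan record. This is a minimal sketch under assumptions: `Plan`, `decompose`, and `split_evenly` are hypothetical names, and `split_evenly` is a purely mechanical stand-in for the LLM call that would normally write a distinct description for each sub-task.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta


@dataclass
class Plan:
    description: str
    location: str
    start: datetime
    duration: timedelta
    children: list = field(default_factory=list)  # finer-grained sub-plans


def split_evenly(plan, chunk):
    """Stand-in for the LLM: cut a plan into consecutive pieces of at most `chunk`."""
    pieces = []
    t = plan.start
    end = plan.start + plan.duration
    while t < end:
        length = min(chunk, end - t)
        pieces.append(Plan(plan.description, plan.location, t, length))
        t += length
    return pieces


def decompose(plan, chunk_sizes, split=split_evenly):
    """Recursively refine `plan`, one granularity level per entry in `chunk_sizes`."""
    if not chunk_sizes:
        return plan
    first, rest = chunk_sizes[0], chunk_sizes[1:]
    if plan.duration <= first:
        # Already at least this fine; try the next, smaller granularity.
        return decompose(plan, rest, split)
    plan.children = [decompose(child, rest, split) for child in split(plan, first)]
    return plan


# Toy usage: a 1:00 pm - 5:00 pm writing block, refined to hours, then to 15 minutes.
writing = Plan("writing", "dorm room", datetime(2023, 2, 13, 13, 0), timedelta(hours=4))
decompose(writing, [timedelta(hours=1), timedelta(minutes=15)])
```

Generating the coarse outline first and filling in detail only afterwards keeps each level consistent with the one above it, which is the point of the top-down approach.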

3.1 Reacting and Updating Plans

- Mechanism: Agents operate within an Action Loop.

- Decision Logic: At each time step, an agent perceives the environment and stores observations. It then asks the LLM: “Based on current state and observations, should I continue the current plan or react?”

- Update: If a reaction is triggered (e.g., meeting an acquaintance, discovering a fire), the system regenerates the plan from that point forward.
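
One iteration of the action loop might look like the sketch below. All names are hypothetical: the agent is a plain dict with `memory` and `plan` keys, and `perceive`, `should_react`, and `replan` are injected callables standing in for the environment and the LLM prompts. Time is represented as a plain number for brevity.

```python
def action_loop_step(agent, now, perceive, should_react, replan):
    """Perceive, store observations, then either keep the plan or regenerate it."""
    observations = perceive(agent, now)
    agent["memory"].extend(observations)  # observations always go into the memory stream

    if should_react(agent, observations):
        # Keep what has already started; regenerate everything from `now` onward.
        already_started = [step for step in agent["plan"] if step["start"] < now]
        agent["plan"] = already_started + replan(agent, observations, now)
        return "react"
    return "continue"


# Toy usage: a fire at time 10 replaces the rest of the day's plan.
agent = {
    "memory": [],
    "plan": [{"start": 9, "what": "breakfast"}, {"start": 12, "what": "lunch"}],
}
result = action_loop_step(
    agent,
    now=10,
    perceive=lambda a, t: ["the stove is on fire"],
    should_react=lambda a, obs: any("fire" in o for o in obs),
    replan=lambda a, obs, t: [{"start": t, "what": "put out the fire"}],
)
```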

3.2 Dialogue

Agent-to-agent dialogue is treated as a special type of “reaction.” It depends on:

- Context Summary: A summary of the relationship and current state retrieved from the memory stream.

- Generation:

- Initiator: Generates the first sentence based on their characteristics and goals.

- Receiver: Treats the dialogue as an observation, retrieves relevant memories, and decides how to respond.

- Continuity: Dialogue continues with reference to history until a participant decides to end it.
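
The turn-taking described above can be sketched as a simple loop. This is an assumption-laden illustration: `run_dialogue` and `generate_utterance` are hypothetical names, `generate_utterance` stands in for the context summary plus LLM call and signals "end the conversation" by returning `None`, and the `max_turns` cap is a safety limit I added rather than something stated in the notes.

```python
def run_dialogue(initiator, receiver, generate_utterance, max_turns=10):
    """Alternate speakers, passing the full history each turn, until someone stops."""
    history = []
    speaker, listener = initiator, receiver
    for _ in range(max_turns):
        utterance = generate_utterance(speaker, listener, history)
        if utterance is None:  # the current speaker decides to end the dialogue
            break
        history.append((speaker, utterance))
        speaker, listener = listener, speaker  # the listener responds next
    return history


# Toy usage with scripted lines instead of an LLM.
script = {
    "Isabella": ["Are you coming to the party?", None],
    "Klaus": ["Yes, I'd love to."],
}


def scripted(speaker, listener, history):
    spoken = sum(1 for name, _ in history if name == speaker)
    lines = script[speaker]
    return lines[spoken] if spoken < len(lines) else None


conversation = run_dialogue("Isabella", "Klaus", scripted)
```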
